Not All AI is Built to Diagnose

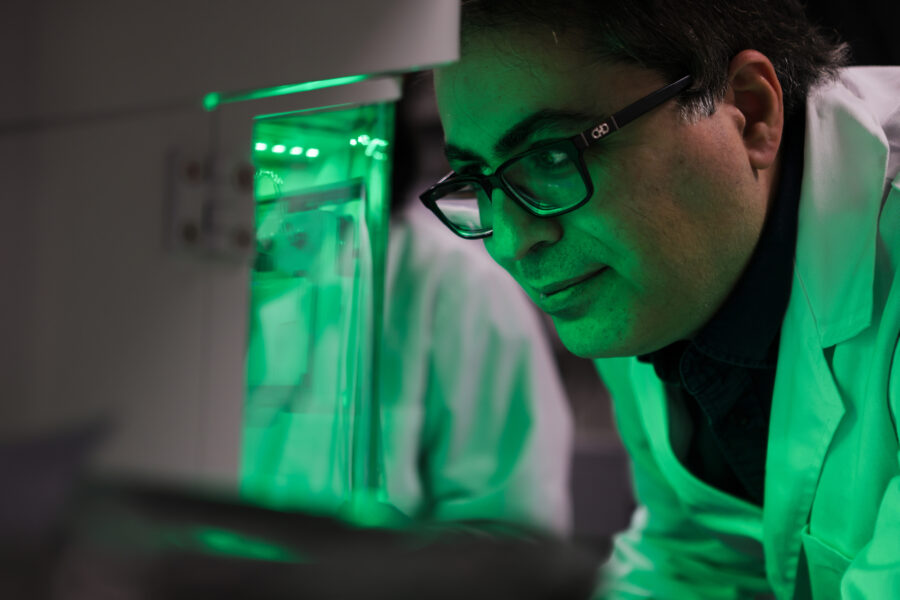

Pictured: Milan Toma

Artificial intelligence (AI) is rapidly transforming healthcare. AI systems can now detect diabetic eye disease from retinal photos and analyze CT images for signs of early-stage lung cancers and stroke.

Right now, at hospitals across the country and throughout the world, specialized algorithms are quietly assisting physicians, prioritizing urgent scans and flagging subtle irregularities that might otherwise go unnoticed. These specialized AI tools—often trained on millions of precisely categorized medical images—are increasingly integrated into real clinical practice.

At the same time, another form of AI has captured the public’s attention: large language models (LLMs). These widely accessible systems, such as ChatGPT and Claude, can analyze both text and images. In theory, these capabilities should make them well-suited for medical tasks, but are general-use AI platforms reliable when it comes to medical diagnosis?

A new study led by College of Osteopathic Medicine (NYITCOM) Associate Professor Milan Toma, Ph.D., suggests they are not. In research published in the scholarly journal Algorithms, Toma and his co-authors, who include NYITCOM Senior Development Security Operations Engineer Mihir Matalia and medical student Sungjoon Hong, tested the reliability of some of the world’s most advanced multimodal LLMs (GPT-5, Gemini 3 Pro, Llama 4 Maverick, Grok 4, and Claude Opus 4.5 Extended).

The researchers provided each AI model with the same CT brain scan showing clear intracranial pathology. Then, they asked the models to analyze the image like a radiologist, identifying the imaging technique used, the location of the pathology in the brain, the primary diagnosis, key features, and potential alternative diagnoses. Overall, the findings revealed a 20 percent rate of fundamental diagnostic error across the AI models (one of the five models tested), along with concerning variability in interpretation and assessment.

At first, the models produced promising results, with all five correctly identifying the image as a CT brain scan. Four models also detected a key finding: an ischemic stroke near the left middle cerebral artery. However, one made a fundamental error, misclassifying the stroke as a hemorrhage on the opposite side of the brain. In a real clinical setting, this error could significantly impact a patient’s health, as ischemic strokes and hemorrhagic strokes require different treatments.

Even among the four AI models that reached the correct diagnosis, explanations differed greatly. Some offered varying interpretations of when the stroke first occurred; others disagreed on alternative diagnoses, on which additional brain regions were affected, and on calcification. The researchers then added a twist: they asked each AI model to grade the others’ diagnostic explanations. This cross-evaluation exposed additional inconsistencies, with some models grading more harshly than others. One model even believed the findings showed chronic brain abnormalities rather than an acute stroke and, as such, systematically penalized the others’ responses.

In recent years, Toma has published more than 30 peer-reviewed studies on AI in medical diagnostics and healthcare, as well as two books on the topic.

“Our research highlights a critical distinction in the AI landscape. Most successful medical AI tools are task-specific algorithms, trained on large datasets of labeled medical images and validated for very specific diagnostic tasks,” says Toma. “However, large language models are not optimized for diagnostics—they are built for linguistics and conversation. Accordingly, they generate explanations that sound authoritative, even when their underlying interpretation is wrong or inconsistent.”

Toma and his co-authors concluded that the future of healthcare AI will likely combine both specialized diagnostic systems and language models. However, while LLMs may be useful for clinical documentation, summarizing reports, or communicating with patients, oversight from a medical expert remains non-negotiable for all diagnostic interpretations.
